Deciding to measure performance, whether by test scores or clinical indicators, is the first step toward improving what you’re measuring. So when the Centers for Medicare and Medicaid Services (CMS) awarded $8.85 million in incentives to hospitals that showed measurable care improvements in 2005, it was no surprise that industry stakeholders took notice. Top-decile hospitals received a 3% bonus, while second-decile winners received 1%; bonuses were based on the achievement of specific clinical quality indicators. Under the program, care quality improved in five targeted clinical areas by the percentages indicated: community-acquired pneumonia (CAP) by 10%, heart failure by 9%, hip/knee replacement and coronary artery bypass graft (CABG) by 5% each, and acute myocardial infarction (AMI) by 4%.

CMS’ core measures project encourages winners to tout their achievements to consumers, recruitable physicians, payers, and anyone else who will listen. As with any program based on achieving scores rather than identifying the processes necessary to achieve success, though, insiders can speculate about which variables are responsible for positive outcomes. In the case of CMS’ core measures, if hospitalist stakeholders can demonstrate that having hospitalists improves a hospital’s CMS core measure performance, they’ve earned important marketing and clinical leverage. Stacy Goldsholl, MD, of Wilmington, N.C.-based TeamHealth, estimates that more than 70% of TeamHealth’s potential clients now ask for her firm’s data on CMS core measures.

The Hospitalist’s Measures

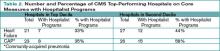

To explore the relationship between a hospital’s position in the top two deciles on core measures and its hospitalist program, The Hospitalist drew data from the CMS/Premier Hospital Quality Incentive Demonstration Project (www.premierinc.com/quality-safety) and SHM’s membership list, used here as a proxy for a comprehensive list of hospitalist programs. All 44 hospitals in the top two deciles for heart failure core measures and the 49 hospitals listed for CAP were checked against the SHM list, and their Web sites were searched for mention of a hospitalist program.

We compared data on two of the five core measures: heart failure and CAP. We expected a higher correlation for CAP because hospitalists are more likely to have primary inpatient responsibility for CAP patients than they are for heart failure patients, for whom cardiologists often have primary accountability. Here are the results of The Hospitalist’s analysis.

Hospitals in the top 10% for heart failure core measures are St. Helena Hospital (Calif.), Homestead Hospital (Fla.), Kootenai Medical Center (Idaho), Mission Hospital (N.C.), Staten Island University Hospital (N.Y.), Harris Methodist Fort Worth (Texas), and Harris Methodist Southwest (Texas).

Top decile performers in CAP are Methodist Medical Center of Illinois (Ill.), St. Vincent Memorial Hospital (Ill.), Heartland Regional Medical Center (Mo.), Stanly Memorial Hospital (N.C.), Alegent Health Immanuel Medical Center (Neb.), Staten Island University Hospital (N.Y.), Presbyterian Hospital of Kaufman (Texas), and Aurora Medical Center (Wis.).

Hospitals with some form of hospitalist program performed well, particularly in the second decile of the two core measures The Hospitalist examined: heart failure (44%) and community-acquired pneumonia (58%).

Complex Issues

Hospitalists expressed varied reactions to the correlation The Hospitalist drew between a hospital’s core measures performance and its use of hospitalists. Adam Singer, MD, CEO of IPC-The Hospitalist Company of North Hollywood, Calif., scanned The Hospitalist’s lists and found a number of top-performing hospitals on IPC’s roster, including Methodist Fort Worth and Methodist Southwest in Texas.

According to Dr. Singer, none of IPC’s 105 hospitalist practices tie core measures to the group’s incentives, and prospective clients haven’t made core measures a top priority. Still, the firm has invested substantially in information technology (IT) infrastructure for hospitalists to track core measures compliance and other clinical indicators.